Meyer Sound Laboratories, Inc.

Berkeley, CA 94702

United States

One of the most celebrated musicals of the Broadway season and a top contender for multiple Tony Awards, Natasha, Pierre & The Great Comet of 1812 (hereafter abbreviated “The Great Comet”) has earned critical acclaim not only for electrifying performances – notably by Josh Groban as Pierre – but also for the way the performers move freely throughout a set that encompasses nearly the entire audience.

From the outset, The Great Comet was written and designed with audience and performers commingling in a common space. The house is the stage, and vice versa. Sound design was manageable in the production’s first small venues, but the challenge of keeping amplified voices and instruments properly localized in space – some orchestra members roam about as well – became increasingly vexing as The Great Comet advanced into larger theatres. When the show prepared to open in Broadway’s expansive Imperial Theatre, sound designer Nicholas Pope was tasked with devising an intricate system that offered a degree of dimensionality and fluidity never before realized on the Great White Way.

A product of Yale University’s esteemed program in theatrical design, Pope has worked as a sound designer for university, regional theatre and cruise ship productions. He served as an associate on two prior productions of The Great Comet, and later became principal designer for the Broadway production.

Nicholas Pope

For those unfamiliar with the show, can you give us some background and outline your involvement?

Pope: Yes, the show started Off-Broadway in 2012 at Ars Nova in a venue with fewer than 100 seats. Although the music style required some amplification, the room was small enough that the direct acoustic energy coming from the performers was sufficient to localize the sound sources reasonably well as they moved around the space. That was largely the case with the tent production in 2013, with about 200 seats, where I came on as an associate. By the time we’d moved to ART in Cambridge, with around 500 seats, localization had become much more of an issue.

And why was the move to Broadway such a challenge? Why did it demand such a sprawling and sophisticated system, and even the creation of custom control software?

Pope: The initial intent comes from the artistic end. To me, the actors drive any theatrical piece, and we wanted to ensure that the actors and the audience were always in the same acoustical space so that the audience becomes part of the world of the actors.

I needed the actors to be a real entity to everybody. A disembodied voice doesn’t work. It was important to have that connection with the voice at all times; it’s absolutely critical.

And that’s not easily done with this production in a Broadway theatre?

Pope: It’s a new level of complexity. When you step up to a Broadway scale of theatre, due to the sheer size of the space it is obvious you can no longer rely on acoustic energy throughout for localization. Yes, you can rely on acoustic energy for anybody who’s sitting near a performer, but if you get a few rows back you no longer get that sense of intimacy and connection.

I wonder if you could elaborate on why this is even more difficult than it might seem at first glance.

Pope: Some of the difficulties are obvious. If you want to localize the sound of actors and instrumentalists throughout the space, not just when they are standing still but while they are moving, you need loudspeakers all around, a total of 248 in this case. It also means that live mics are in front of the main loudspeakers at all times, which can be a nightmare in itself.

And then there are the timing issues. The room is large enough that when two performers are completely across the room from each other, which occurs often, they are out of musical time, because the distance alone is enough to put them off the beat. Bringing all of those things back together and keeping individual vocalists and instruments in time with each other when they are spread around distant parts of the auditorium was one of the tricks of the show.

And all that perspective changes with location. At one place in the room it is zero time coming off of an instrument that is right beside you, while at another location it is perhaps 70 milliseconds later that information arrives. How do you manage that in the electronic side of things and keep it all organized? That was another trick of the show.
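As a rough illustration of the arithmetic behind those figures, the relationship between distance and arrival time can be sketched in a few lines of Python. This is only a sketch: it assumes sound travels at about 343 meters per second (dry air near 20 °C), and the function names are hypothetical, not part of D-Mitri or SpaceMap.

```python
# Assumed speed of sound in dry air at roughly 20 degrees Celsius.
SPEED_OF_SOUND_M_PER_S = 343.0


def propagation_delay_ms(distance_m: float) -> float:
    """Return the time, in milliseconds, sound needs to travel distance_m."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return distance_m / SPEED_OF_SOUND_M_PER_S * 1000.0


def distance_for_delay_m(delay_ms: float) -> float:
    """Return the distance, in meters, sound covers in delay_ms milliseconds."""
    if delay_ms < 0:
        raise ValueError("delay must be non-negative")
    return delay_ms / 1000.0 * SPEED_OF_SOUND_M_PER_S


if __name__ == "__main__":
    # How far away is a source whose sound arrives 70 ms late?
    print(f"{distance_for_delay_m(70.0):.1f} m")
```

Under that assumption, a 70-millisecond arrival gap corresponds to roughly 24 meters between a listener and the source, which gives a sense of the distances involved in a large auditorium.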

It is mind boggling when you start to think it through. Why did you pick the Meyer Sound D-Mitri® digital audio platform as your primary tool for managing all of this?

Pope: I had worked with D-Mitri and its predecessor, the LCS™ Matrix 3 system, on a number of occasions, and it was pretty much on top of my list the entire time, mainly because it had the largest matrix available at 288 by 288.

So it’s D-Mitri that’s responsible for making sure all the apparent sound sources are right where they need to be, moment by moment?

Pope: Yes, everything goes out through the D-Mitri processing engine. We didn’t have to use all of the 288 ins and outs, but I think we’re pushing 250 on both sides. It’s the backbone of the whole show. It’s a robust system that really sets the standard in its category. Meyer Sound is very much on the leading edge of the trend in this department.

And, on the software side, you are using Meyer Sound’s SpaceMap® for panning – with some custom additions, correct?

Pope: Yes, on one level we are taking heavy, heavy advantage of the mapping capabilities of SpaceMap throughout the show. All localization of sounds runs through the basic cueing architecture of SpaceMap. But we did have to devise a new front-end graphical interface to run it for this show.

And why is that?

Pope: It’s not because there’s anything inherently wrong with SpaceMap’s own interface. I have used it many times, and it works well for basic programmed multi-dimensional panning. It’s perfect for applications like theme parks, spectaculars like Cirque du Soleil, and even most theatre applications. It’s fine if you only have a dozen or two panning trajectories in the show. But when you have hundreds going on, many of them simultaneously, and you need to make on-the-fly modifications in real time, you need something different.

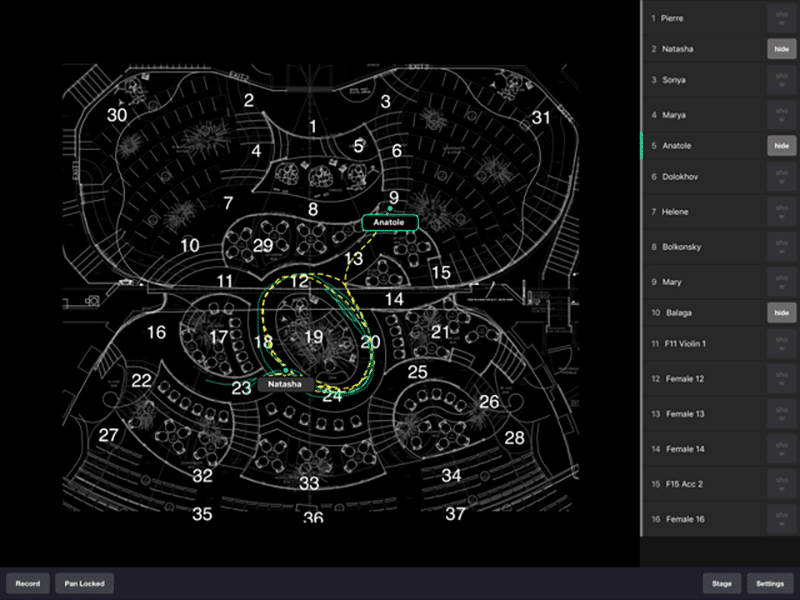

The custom iPad interface for SpaceMap shows programmed trajectories of performers moving about the set. The “follow sound” operator can make adjustments to the panning matrix in real time on the touchscreen.

We understand that not only is your D-Mitri system the largest ever used on Broadway, but that yours is the most complex SpaceMap program ever attempted. How do you make it work in the end?